An AI Assistant for TikTok Trust & Safety Product

This project is a 0-to-1 exploration of how AI can assist, not replace, human judgment in high-risk, time-sensitive moderation workflows.

Overview

MoLA is designed for moderators and aims to support risk judgment rather than replace human decisions, particularly in cases where accuracy, accountability, and time pressure coexist.

Since its launch, MoLA has shown a positive impact on moderation quality, with results exceeding initial expectations.

Background

Defining how AI should exist in the product

As part of a broader initiative, the engineering team had already identified AI as a potential lever to support moderators in their daily work. However, there was no clear consensus on how AI should be applied, nor what form it should take within the moderation workflow.

This ambiguity created an opportunity for a design-led exploration to define not just an AI feature, but the role AI should play in the product.

Problem

Moderators are not afraid of complex cases; they are afraid of making the wrong decision under time pressure.

Our primary users are moderators responsible for evaluating user-generated videos under strict operational constraints.

Moderators operate under time-based KPIs, where failing to complete a case quickly can impact performance metrics. At the same time, incorrect judgments can compromise platform safety, creating constant tension between speed and accuracy.

[Figure: moderation workflow with the steps View case, Identify, Analysis, Search, Selected violated policy, and Next case.]

Searching behavior: moderators used at least 5 information search platforms:

1. Personal doc for case collection

2. Lark chat history for reference

3. Team official training material

4. [External] Google

5. [External] GPT

GOAL

How might we use AI capabilities to help moderators reduce time cost and increase moderation accuracy during the moderation process?

Product Structure Exploration

First, we explored what form AI should take in the product structure.

Option 1

Fully Automated Decision-Making

Full automation, where AI directly determines violations, was considered early on.

Automating moderation decisions promised maximum efficiency gains once the model is accurate enough

With the currently trained model, reviewers would need to spend extra effort verifying or correcting AI decisions, offsetting any theoretical efficiency gains

Vague decision accountability between AI and moderators if AI makes the final decision

Option 2

Chatbot-style, On-demand AI Assistant

A chatbot where moderators could ask AI questions as needed.

[Mockup: AI Assistant chat panel with Image search, Translate, and More tools, and the input hint "Ask me anything here or use @ for specific tool".]

Conversational AI is a familiar and flexible interaction model for accessing information

Prompt-based interaction adds steps to each case review, which may cause a 5–10 second delay per case

Searching for information is an active behavior. Under KPI pressure, moderators were unlikely to consistently engage with the tool, leading to underutilization

Option 3

Final Selection

Context-aware, Auto-triggered Assistance

Assistance triggered automatically from the case context, suited to the fixed and repetitive nature of moderation tasks.

[Mockup: AI Assistant window for case 4/10, showing the sample caption "kabeh podo golek vidio anak baju biru aku dewe yow gk nemu..." with Evidence 1, Evidence 2, and Evidence 3.]

AI surfaces factual signals and policy references, while final judgment remains with the moderators.

Context from multiple systems is consolidated into a single view, eliminating manual searching.

No prompt writing, no mode switching, and no required interaction.

How AI-Generated Content Exists Visually

Once we had defined AI as context-aware, auto-triggered assistance, we still needed to decide where to place that context, since our user research showed there was a lot of it to display.

Option 1

Modal-based AI Assistant (On-demand)

AI insights were displayed in a modal dialog triggered by user action

Moderation assistant

Your personal moderation assistant

Video risk · 4

Risk trend

Similar case

Case bank

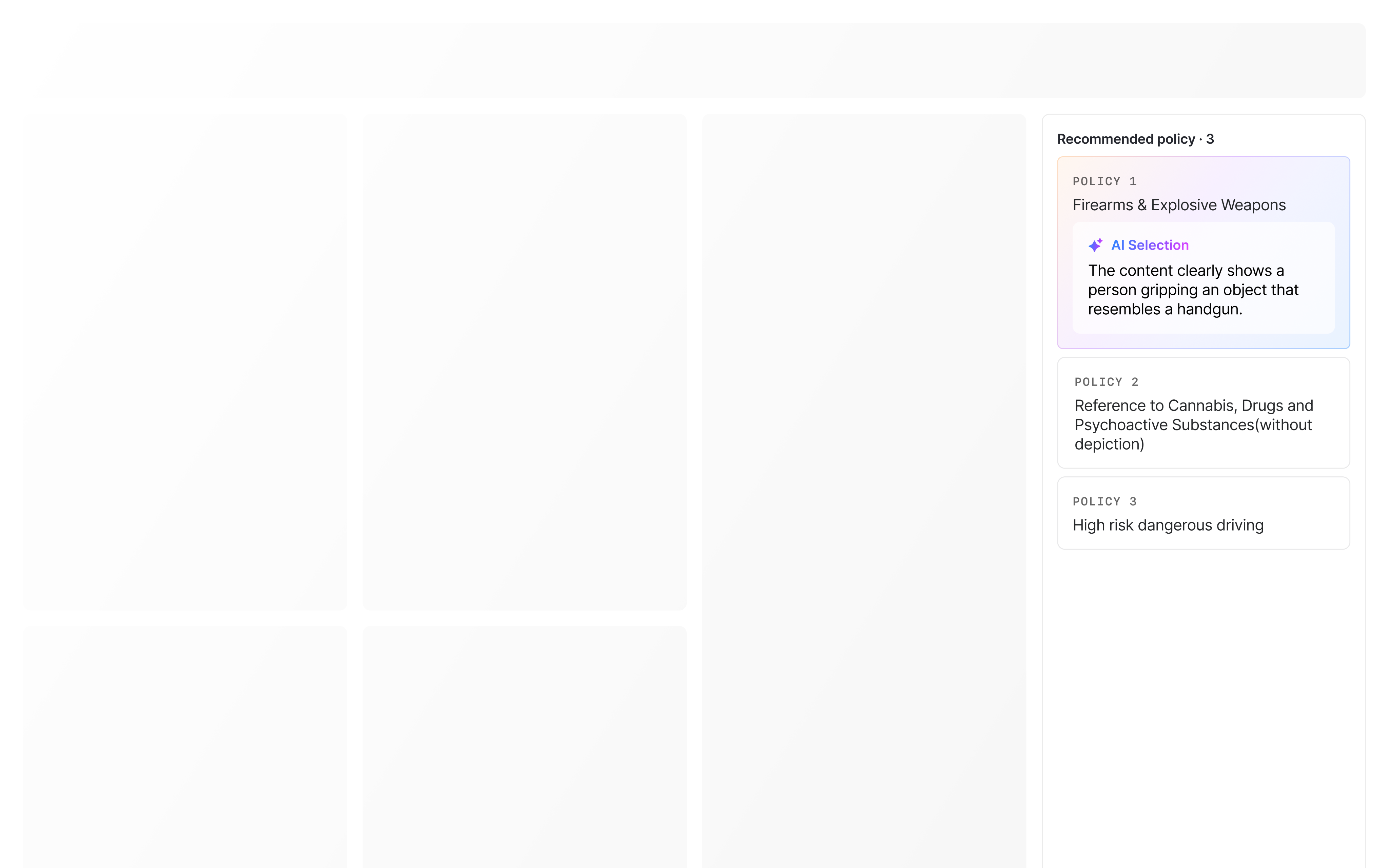

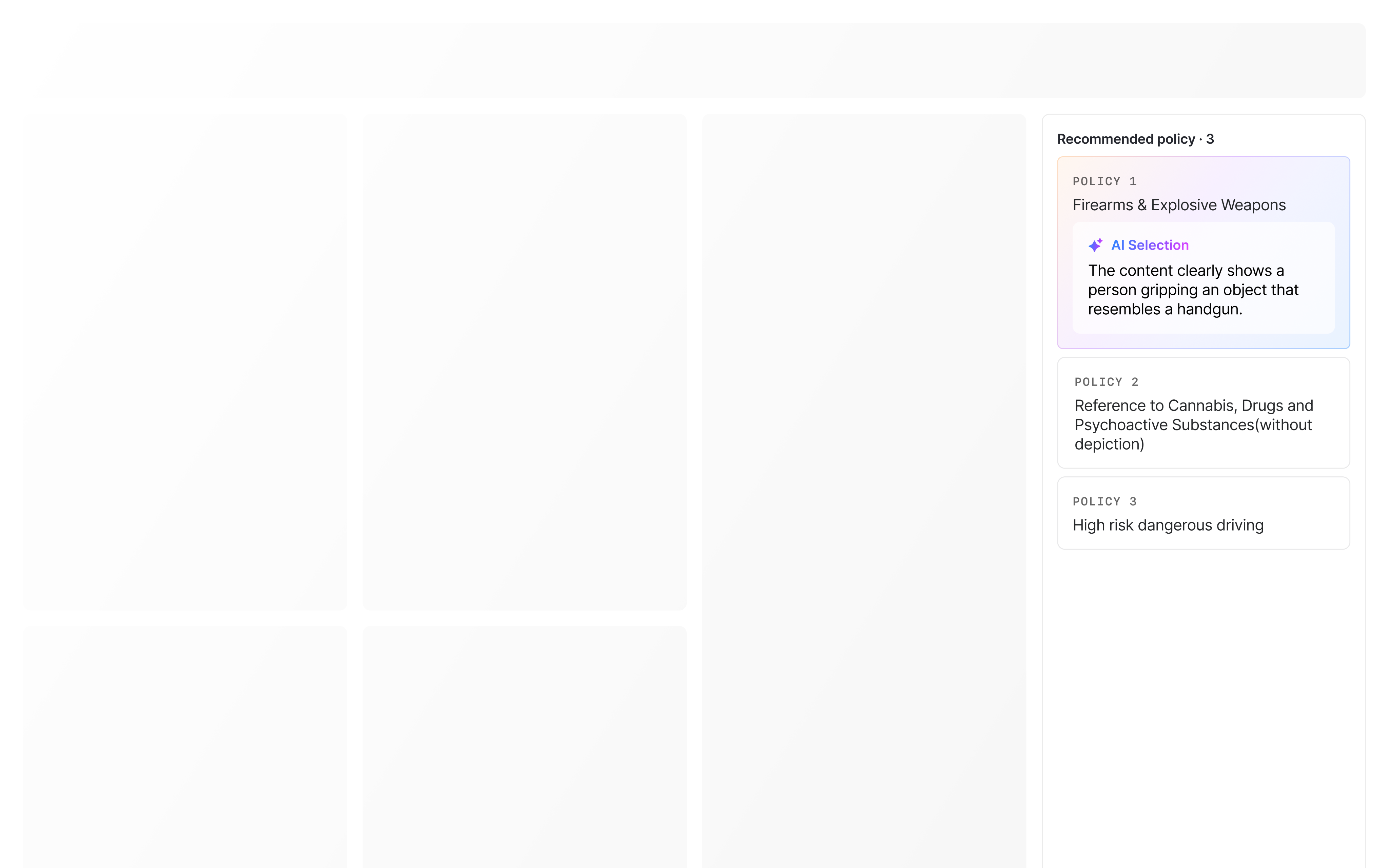

Recommended policy

The modal blocked access to the moderation interface, preventing simultaneous reference.

Repeated open/close actions added unnecessary operational overhead.

Option 2

Embedded Side Panel

The AI assistant was embedded as a right-side panel within the moderation interface

[Mockup: AI Assistant side panel with the sections Video risk · 4, Risk trend, Similar case, Case bank, and Recommended policy.]

Short interaction path

Aligned with user intuition

Limited space constrained information visibility

Required frequent scrolling or expansion to access full context

Option 3

Final Selection

Independent AI Window

An independent, draggable window launched from the moderation platform

Decoupled from the core interface, allowing reviewers to position it freely

More than 95% of moderators work with two monitors, so a separate window preserved cognitive continuity during complex decisions

Sufficient space to display comprehensive information

Longer interaction path than a side panel

Final design

AI is automatically triggered when a new case is opened

A single click is the only interaction required throughout the whole moderation process

AI-generated information updates automatically when moderators switch to the next case

Additional steps are added into the workflow only if necessary

An all-in-one information search feature replaces cross-system information searching

Hover to show more actions

Visual hint for Ask MoLA interaction

Search result display

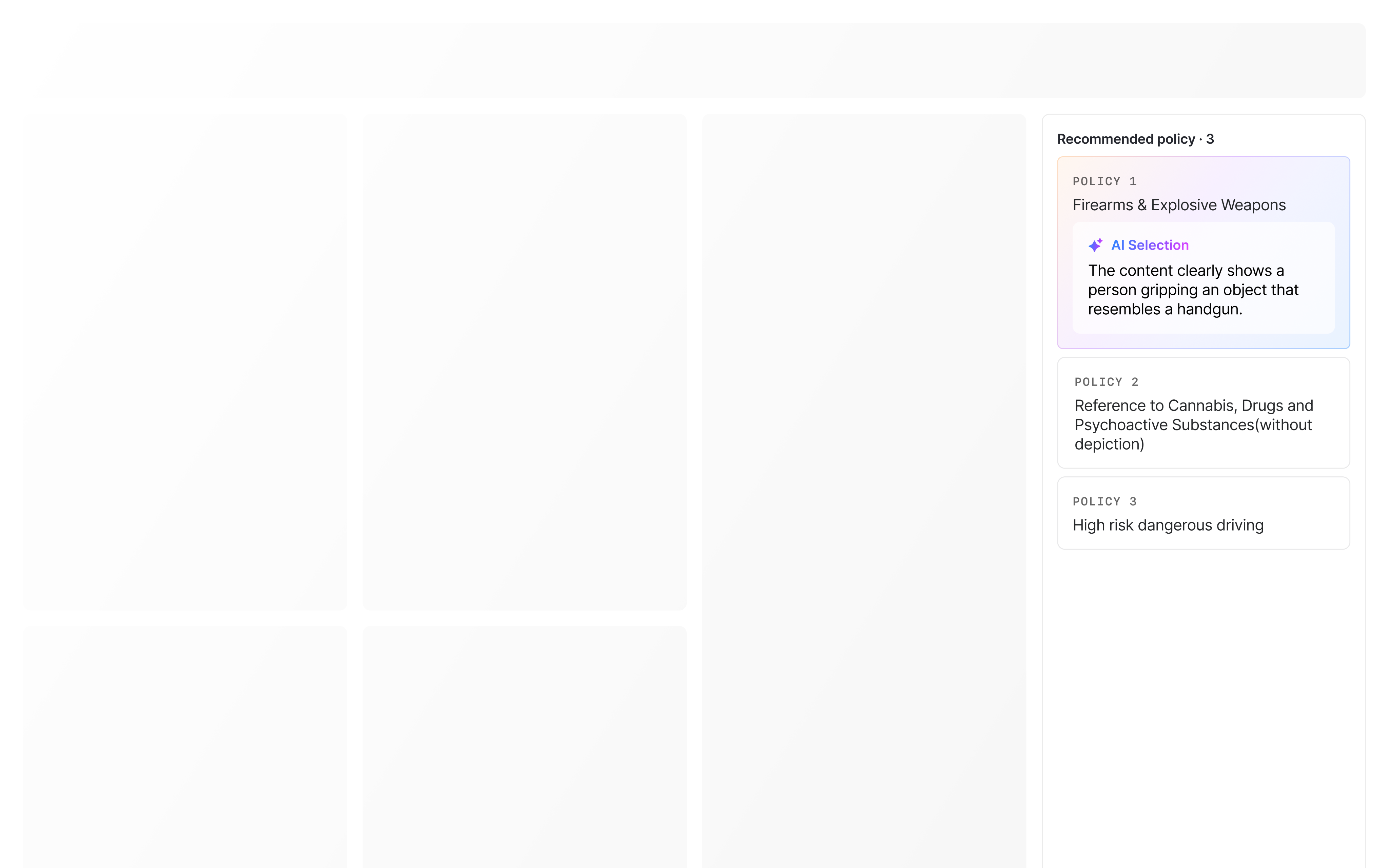
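The auto-trigger behavior described above can be sketched in a few lines of Python. This is an illustration only, not MoLA's actual implementation: `AutoTriggeredAssistant`, `CaseContext`, and the source callables are hypothetical names, and the assumed data shape (lists of signals and policy references per case) is a simplification. The sketch shows two ideas: context is rebuilt whenever a case is opened, and the assistant only collects information, never a decision.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class CaseContext:
    """Consolidated context for one case (hypothetical shape)."""
    case_id: str
    signals: List[str] = field(default_factory=list)
    policy_refs: List[str] = field(default_factory=list)


# A source maps a case ID to partial results; real systems are assumed, not shown.
Source = Callable[[str], Dict[str, List[str]]]


class AutoTriggeredAssistant:
    """Rebuilds context on every case open; it never records a verdict."""

    def __init__(self, sources: List[Source]) -> None:
        self.sources = sources
        self.current: Optional[CaseContext] = None

    def on_case_opened(self, case_id: str) -> CaseContext:
        # Called by the host platform; the moderator writes no prompt.
        context = CaseContext(case_id=case_id)
        for source in self.sources:
            result = source(case_id)
            context.signals.extend(result.get("signals", []))
            context.policy_refs.extend(result.get("policy_refs", []))
        # Replace the previous case's context instead of appending to it.
        self.current = context
        return context
```

In this sketch, switching to the next case simply calls `on_case_opened` again, which is why the displayed information never carries over from the previous case.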

MVP result

The MVP showed a positive impact on moderation quality (+1.27%), with results exceeding initial expectations.

Efficiency metrics (AHT) remained largely unchanged compared to the baseline (+0.58 seconds). Given the project’s 0 → 1 stage and the launch of a non-traditional AI assistant, this outcome was considered acceptable.

User Feedback Highlights

High Cognitive Load

Some moderators felt that opening and managing a second window added cognitive overhead, occasionally increasing distraction rather than reducing effort, especially in cases requiring deep focus.

High Information Density

Moderators noted that policy suggestions were sometimes less accurate than existing tools, and that displaying too much information at once made it harder to quickly identify relevant policies.

Reflection

Is the Independent Window the Right Container for AI Context?

The independent window effectively addressed information visibility and space constraints during early exploration. However, user feedback suggested that in certain high-focus scenarios, managing a separate window could introduce additional cognitive load.

This raised an important question for future iterations:

Should AI context always live in a parallel workspace, or should its presence adapt dynamically based on task complexity and moderators’ experience?

How Should Policy Information Be Structured?

Feedback around policy cards highlighted a tension between completeness and usability. While comprehensive policy coverage is valuable, presenting all information simultaneously can overwhelm reviewers and slow down decision-making.

This led to a key learning:

In AI-assisted moderation, progressive disclosure may be more effective than full transparency.
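One possible reading of this learning, sketched in Python under stated assumptions: policy cards are ranked, only the top few are shown at first, and the rest stay behind an explicit expand action. The names `PolicyCard`, `relevance`, and `visible_count` are hypothetical, and the existence of a per-card relevance score is an assumption rather than something the project confirmed.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PolicyCard:
    title: str
    relevance: float  # assumed model score in [0, 1]; hypothetical field


def disclose(
    cards: List[PolicyCard], visible_count: int = 2
) -> Tuple[List[PolicyCard], List[PolicyCard]]:
    """Split cards into (shown immediately, hidden until expanded)."""
    # Highest relevance first; ties keep their original order (stable sort).
    ranked = sorted(cards, key=lambda card: card.relevance, reverse=True)
    return ranked[:visible_count], ranked[visible_count:]
```

Nothing is discarded in this sketch: the hidden cards remain one action away, which keeps coverage complete while reducing what a reviewer has to scan first.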
